To AI, you look like your competitors.

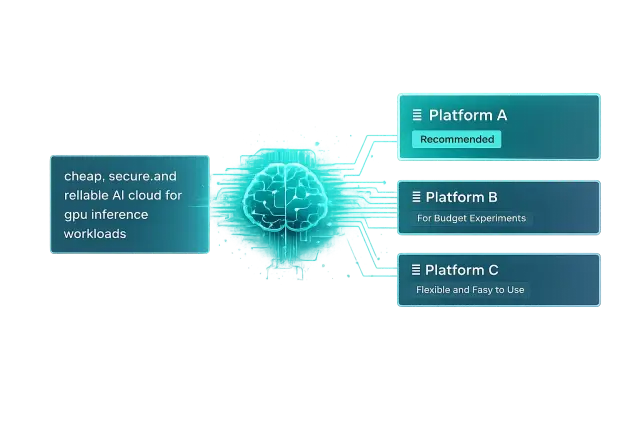

If asked 'Best GPU cloud for AI workloads', AI responds with a list of similar options.

You become just another option.

GEO FOR AI & DEVELOPER PLATFORMS

Docs, blogs, architecture guides, case studies - all there. But AI can't tell which piece answers which buyer question.

Your content was built for reading - not for recommendation.

One or two platforms get framed as the best fit, with clear reasons. The rest? Just alternatives.

If you're not the pick, you're barely considered.

Most GEO tools test in ChatGPT, Gemini, and Perplexity.

Are your users using Claude?

AI engines don’t all narrow a category the same way. Some converge fast on a few names. Others preserve more ambiguity.

One rewards comparisons. Another rewards authority. A third rewards specificity. What works in one may not work in another.

The same buyer query can lead to different recommendations because the models do not retrieve, read, or rank the market the same way.

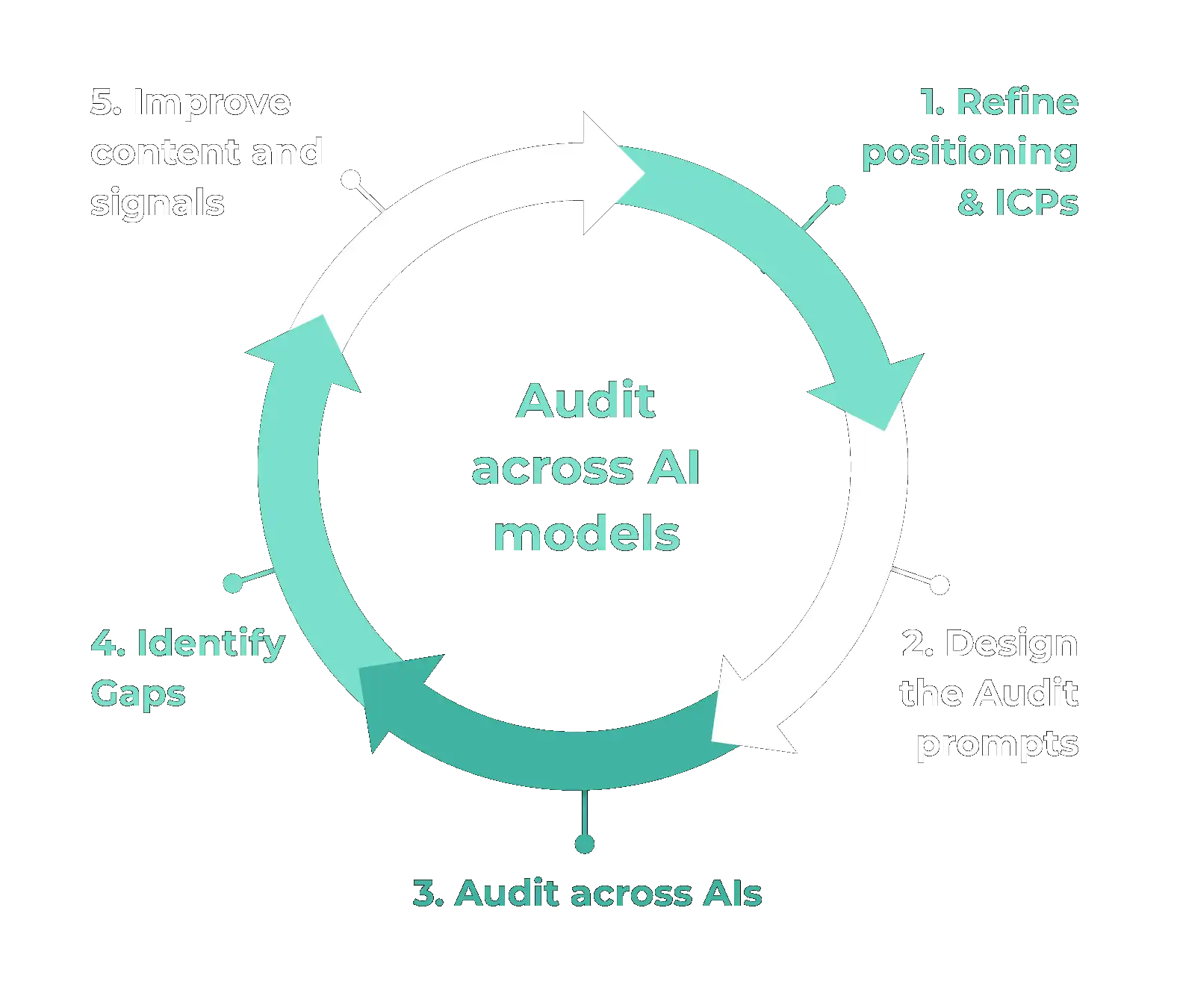

We establish how AI engines understand your company, which competitors appear beside you, and where your strongest use cases are being missed or misframed.

This shows exactly where the visibility and positioning gaps are.

We reshape your website, docs, comparison pages, and external proof so AI engines can connect your product to the right buyer questions.

Not more content. Clearer positioning, sharper answers, stronger authority.

We track how your brand appears in AI answers, which prompts you win, where competitors outrank you, and how the framing changes over time.

We scale what works and adjust what does not.

Most platform companies already have developer relations, content, and marketing people doing good work. We don't replace them. We add the layer they don't have: someone focused entirely on how AI engines understand, describe, and recommend your product.

We are platform architects turned GEO specialists. Our founding team comes from Cisco, IBM, Nokia, Amazon and Infinera, with decades of building systems at scale before we started building this.

We start with an audit. We test structured prompts, built from your buyer personas and their actual questions, in Claude, ChatGPT, and Gemini. The audit shows you exactly where you appear, how you're framed, and where your competitors are winning the recommendation.

Request an Audit